Objects and Pixels

Learning objectives of this topic

- Pixel-based vs. object-based remote sensing analysis

- Pixel assignment to form clusters/objects

- Why use object-oriented algorithms?

- Introduction to segmentation procedures

In this topic we look at the difference between analyzing single pixels and analyzing clusters of these image cells. While pixel-based analysis only takes into account the values that belong to the respective image cell, object-based analysis quantifies the statistics inherent to a group of, usually neighboring, cells.

One pixel, one class

Looking at the graphic above, we can see that every pixel has its own value. Whether it is a digital number (DN) or a more complex quantity derived from time series data, such as a multi-temporal standard deviation, we can use this value to classify the image cell and assign it to its designated class.
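The "one pixel, one class" idea can be sketched in a few lines of Python. This is only an illustration: the threshold values, the class names and the tiny example image are hypothetical, and a real workflow would typically use an array library and a trained classifier instead of fixed thresholds.

```python
# Hypothetical upper bounds and class names, e.g. for a vegetation-index-like value.
THRESHOLDS = ((0.2, "bare"), (0.5, "sparse vegetation"))
DEFAULT_CLASS = "dense vegetation"


def classify_pixel(value):
    """Return the label of the first upper bound the value falls below."""
    for upper_bound, label in THRESHOLDS:
        if value < upper_bound:
            return label
    return DEFAULT_CLASS


def classify_image(image):
    """Classify every cell independently; neighboring cells are never consulted."""
    return [[classify_pixel(value) for value in row] for row in image]


# Illustrative 2 x 3 image, one value per pixel.
image = [
    [0.10, 0.15, 0.60],
    [0.30, 0.55, 0.70],
]
classified = classify_image(image)
```

Note that `classify_pixel` only ever sees a single value, which is exactly the limitation of pixel-based analysis: shape, edges and neighborhood play no role.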

Which class is chosen depends on the method being used, which may rely on manual definition or be completely automated (e.g., an unsupervised classification or a deep-learning-based algorithm). Pixel-based analysis provides a detailed picture of the remote sensing data set we are looking at. While the researcher is able to exploit the data at the level of the individual cell, several important parameters are not taken into account, such as:

- The shape of objects or ‘targets’, which makes certain areas of interest (AOI) easily distinguishable, such as buildings or regularly shaped pivot irrigation systems.

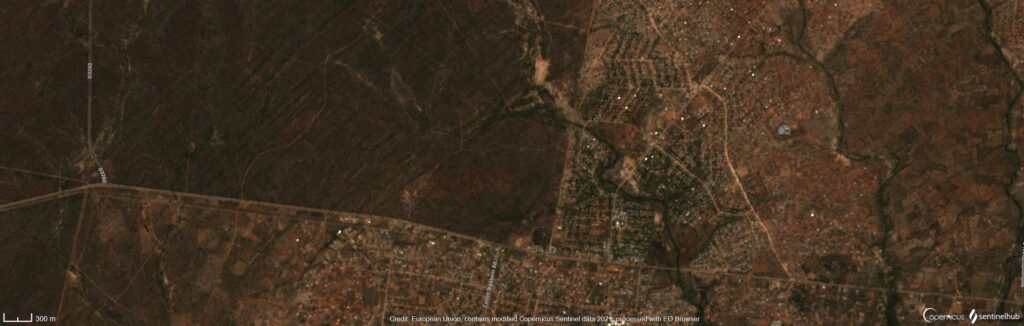

- The edges which define the transition from, e.g., one land use type to another. Typical examples are roads that cross open countryside and sharply separate two types of land use/cover, as displayed in the image below.

- The neighborhood of a pixel, i.e., the cells that surround it and their statistics. Following Tobler’s first law of geography (“Everything is related to everything else, but near things are more related than distant things.”), we can assume that a pixel’s brightness, or whichever quantity it may represent, is influenced by its surroundings to a certain degree.
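As a rough illustration of the neighborhood idea, the following sketch computes the mean and standard deviation of the window around a pixel. The function name, the 3 x 3 window and the example values are assumptions made for this example only.

```python
from statistics import mean, pstdev


def neighborhood_stats(image, row, col, radius=1):
    """Mean and population standard deviation of the window around (row, col).

    The window is clipped at the image border, so edge pixels use fewer cells.
    """
    n_rows, n_cols = len(image), len(image[0])
    window = [
        image[r][c]
        for r in range(max(0, row - radius), min(n_rows, row + radius + 1))
        for c in range(max(0, col - radius), min(n_cols, col + radius + 1))
    ]
    return mean(window), pstdev(window)


# Illustrative image: a uniform area next to a brighter column.
image = [
    [10, 10, 10, 50],
    [10, 10, 10, 50],
    [10, 10, 10, 50],
]
homogeneous = neighborhood_stats(image, 1, 1)  # window contains identical values
near_edge = neighborhood_stats(image, 1, 2)    # window straddles the 10/50 boundary
```

A standard deviation of zero indicates a homogeneous neighborhood, while a larger value hints at an edge or a transition between cover types close to the pixel.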

Creating objects from pixels

Objects, or ‘clusters’ as they are often called, are groups of pixels that are analyzed as a unit. The algorithms which can be applied in object-oriented analysis are largely identical to those used in pixel-oriented approaches. However, object-oriented classifiers make use of two domains:

- Spectral characteristics (the same as in pixel-oriented approaches)

- Neighbourhood relations/statistics

The spatial patterns represented by the neighbourhood of a pixel provide extra information that is not available at the pixel level. As explained earlier, it is assumed that the surroundings of a pixel are likely to resemble the respective image cell itself. If pixels are clustered together to form classification objects, they create coherent or homogeneous areas with similar spectral and/or spatial patterns. Such clusters can be created using segmentation algorithms.
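Once pixels have been grouped, each object can be described by features that do not exist for a single pixel. The sketch below assumes a label map that already assigns every pixel to an object; the function name, the chosen features and the example data are hypothetical.

```python
from collections import defaultdict


def object_features(image, labels):
    """Summarize each labeled object by pixel count, mean value and bounding box."""
    cells = defaultdict(list)
    for r, row in enumerate(labels):
        for c, label in enumerate(row):
            cells[label].append((r, c))

    features = {}
    for label, coords in cells.items():
        values = [image[r][c] for r, c in coords]
        rows = [r for r, _ in coords]
        cols = [c for _, c in coords]
        features[label] = {
            "n_pixels": len(coords),                             # spatial: object size
            "mean_value": sum(values) / len(values),             # spectral: average value
            "bbox": (min(rows), min(cols), max(rows), max(cols)),  # spatial: extent
        }
    return features


# Illustrative image and a matching label map with two objects.
image = [
    [12, 11, 40],
    [10, 13, 42],
]
labels = [
    [1, 1, 2],
    [1, 1, 2],
]
features = object_features(image, labels)
```

The mean value covers the spectral domain, while pixel count and bounding box are simple stand-ins for the spatial domain; a classifier can then work on these per-object features instead of on single pixel values.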

Segmentation – Bringing pixels together

Segments are clusters of pixels which are similar to each other in the domains mentioned earlier. The process of creating them is called ‘segmentation’ and can be carried out with commonly used software or programming languages such as QGIS, R, Python or GDAL. Today, it is widely used in computer science and image analysis. The difference between a classification, a detection and, finally, an image segmentation is visualized below.

(a) The human eye can thematically separate different targets.

(b) Clusters are detected.

(c) Based on pixel clusters, a segmentation is carried out.

In essence, a segmentation produces results similar to what the human eye does all the time: detecting patterns and patches that look similar in order to group them and perceive them as entire objects. For example, if you look at a field of grass next to a road, you will instantly group the grass as one object and the paved road as another. One typical use of segmentation is the identification of individual tree crowns, as in the image below. This case also shows how pixel clustering provides more meaningful information (e.g., from the view of ecological research) through the analysis of entities that can be attributed as a whole. Single pixels which only represent parts of a tree, or which are a mixture of the surroundings, are much harder to relate to the characteristics of a complete object.

Consequently, if the spectral proximity and the neighborhood criteria are matched, a segmentation will cluster pixels and create segments, usable for remote sensing analysis.
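One very simple way to combine the two criteria is region growing: starting from a seed pixel, directly adjacent pixels are added to the segment as long as their values are close enough. The following is a minimal sketch of that idea, not the method of any particular software package; the function name, the 4-neighborhood and the `tolerance` parameter are assumptions chosen for illustration.

```python
from collections import deque


def segment(image, tolerance):
    """Label 4-connected regions in which adjacent values differ by <= tolerance."""
    n_rows, n_cols = len(image), len(image[0])
    labels = [[0] * n_cols for _ in range(n_rows)]  # 0 means "not yet assigned"
    next_label = 0

    for seed_r in range(n_rows):
        for seed_c in range(n_cols):
            if labels[seed_r][seed_c]:
                continue  # pixel already belongs to a segment
            next_label += 1
            labels[seed_r][seed_c] = next_label
            queue = deque([(seed_r, seed_c)])
            while queue:
                r, c = queue.popleft()
                # Neighborhood criterion: only the four direct neighbors are visited.
                for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
                    inside = 0 <= nr < n_rows and 0 <= nc < n_cols
                    # Spectral criterion: value must be close to the adjacent pixel.
                    if (inside and not labels[nr][nc]
                            and abs(image[nr][nc] - image[r][c]) <= tolerance):
                        labels[nr][nc] = next_label
                        queue.append((nr, nc))
    return labels


# Illustrative image: a dark area, a brighter area and one very bright pixel.
image = [
    [10, 11, 50, 52],
    [12, 10, 51, 49],
    [11, 12, 50, 90],
]
labels = segment(image, tolerance=5)
# labels == [[1, 1, 2, 2], [1, 1, 2, 2], [1, 1, 2, 3]]
```

Because each pixel is compared with its direct neighbor rather than with the segment mean, gradual gradients can chain into one large segment. Operational segmentation algorithms usually apply more robust homogeneity criteria.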

Sources & further reading

Elachi, C. & van Zyl, J. (2015²). Introduction to the Physics and Techniques of Remote Sensing. Hoboken, USA: John Wiley & Sons, Inc.

Facebook Research (2016). Learning to segment. <https://research.fb.com/blog/2016/08/learning-to-segment/>.

Jensen, J.R. (2007²). Remote Sensing of the Environment. An Earth Resource Perspective. Upper Saddle River, USA: Pearson Prentice Hall.

Phiri, D. & Morgenroth, J. (2017). Developments in Landsat Land Cover Classification Methods: A Review. In: Remote Sensing 9(9), 967. https://doi.org/10.3390/rs9090967.

Rees, W.G. (2010²). Physical Principles of Remote Sensing. Cambridge, USA: Cambridge University Press.

Schowengerdt, R.A. (2007³). Remote Sensing. Models and Methods for Image Processing. San Diego, USA: Academic Press.